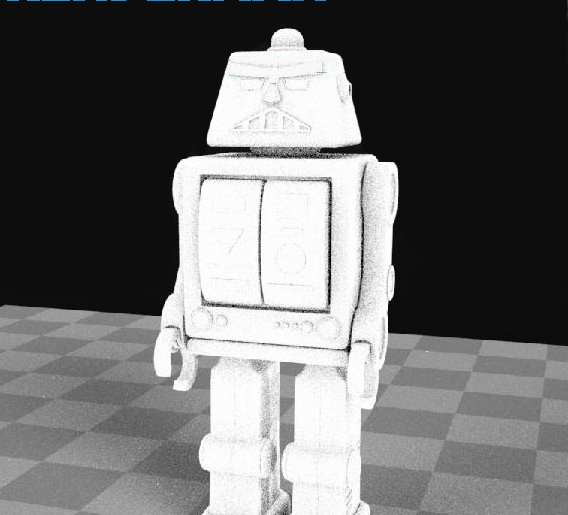

You can use a PxrPrimvar node to read an attribute in from your geo and pull it into a shader, that's fun. If you export your geo to USD, you'll find your materials will break, that's less fun. Turns out it's a 'feature' of the open source USD tools to rename certain Houdini attributes to the default USD attributes.

The variance number was easy to find under the samples tab of the ris rop, but I couldn't see the min/max samples anywhere. Only found them via a screenshot in the renderman-for-houdini docs talking about something else; they're under the hider tab. The same screenshot gave a sense of what 'production' settings might be (the defaults are min -1 and max 0). Settings like those make fur look pretty good; an HD frame takes about 20 mins to render, which feels reasonable.
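To make the variance / min / max relationship concrete, here's a minimal Python sketch of how an adaptive sampler generally behaves. This is not RenderMan's actual algorithm; `render_pixel`, `sample_fn` and the use of standard error as the error metric are all my own assumptions for illustration.

```python
import random
import statistics


def render_pixel(sample_fn, min_samples, max_samples, variance_threshold):
    """Sample a pixel until the estimate looks stable or the budget runs out.

    Hypothetical sketch: a real renderer uses its own error metric.
    """
    # stdev needs at least two samples, so never start with fewer than that
    samples = [sample_fn() for _ in range(max(2, min_samples))]
    while len(samples) < max_samples:
        # Standard error of the mean, standing in for the renderer's variance test
        error = statistics.stdev(samples) / len(samples) ** 0.5
        if error <= variance_threshold:
            break  # converged early, stop spending samples on this pixel
        samples.append(sample_fn())
    return statistics.fmean(samples), len(samples)


if __name__ == "__main__":
    rng = random.Random(1)
    # A flat pixel stops at the minimum, a noisy one keeps going
    print(render_pixel(lambda: 0.5, 4, 64, 0.01))
    print(render_pixel(rng.random, 4, 64, 0.01))
```

The point is that min samples sets the floor, max samples sets the ceiling, and the variance threshold decides where in between each pixel stops. Lower the threshold and noisy pixels (like fur) get pushed towards the max.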


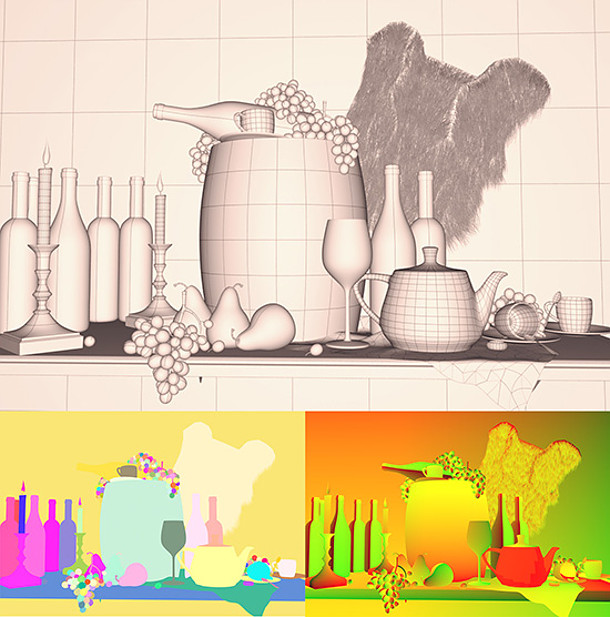

The docs state pixel variance threshold and min/max samples as how to drive quality, matching how most pathtracers these days do their thing.

The renderman docs are pretty clear about how to render curves, hair, fur. Create an attribute rename sop, switch to the renderman tab I'd never noticed before, rename width to width, and set the mode to 'varying float'. This tells houdini to export the width attribute to renderman, and to make sure it understands the width can change along the primitive length. I did this, then applied an attribute delete sop to tidy up the stuff I didn't need. To my surprise the renders went haywire, hair curves now the thickness of tree trunks. I'd kept the width attribute, but also deleted an easily missed detail attribute, rixlate. Turns out that's the tag renderman uses to know what attributes to export and in what format. DON'T DELETE THIS! Rixlate? An anti dandruff treatment? Maybe. Pretty sure this isn't needed anymore for 17.5/Rman22.

The docs for the hair color shading node describe some nice randomization features, which rely on having a per hair attribute. The houdini hair and fur tools generate this for free, but it wouldn't work in my render tests. After trying a few things I realised that it has to be promoted from prim to point, and then exported using the same method as shown above. This has also been fixed in H17.5/Rman22, you no longer need to do this promote dance.

600,000 hairs, PxrHairColor and PxrMarschnerHair, 3 seconds to export the geo to renderman, 12 seconds to render at 1280x720.

And this is prman21, can't wait to try prman22.
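For anyone unsure what the prim to point promote mentioned above actually does to the data, here's a tiny Python sketch. In Houdini you'd just use an attribute promote sop; the function and argument names here are made up for illustration and aren't part of any Houdini or RenderMan API.

```python
def promote_prim_to_point(prim_values, prim_points, num_points, default=0):
    """Copy each curve's value onto every point that curve owns.

    prim_values: one value per curve (hypothetical per hair attribute)
    prim_points: for each curve, the list of point numbers it uses
    """
    point_values = [default] * num_points
    for value, points in zip(prim_values, prim_points):
        for pt in points:
            point_values[pt] = value
    return point_values


if __name__ == "__main__":
    # Two hairs: the first owns points 0-2, the second owns points 3-4
    print(promote_prim_to_point([7, 9], [[0, 1, 2], [3, 4]], 5))
```

Every point along a hair ends up carrying the same value as its parent curve, which is what lets the attribute be exported per point.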


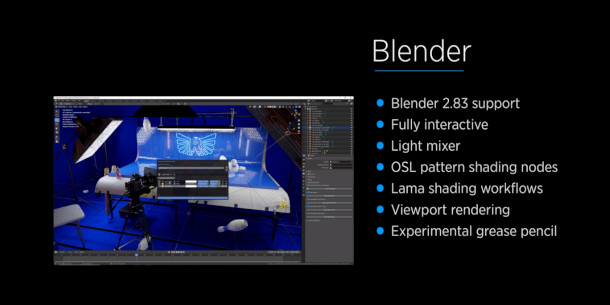

Had seen a few people get great results by mapping density directly into emission for volumes. Tests inside Mantra looked great, so pushed that workflow to the studio. I then totally forgot we'd have to match that in Renderman for the lighting students using Katana; here's the results of that test.

The high level idea is to take the incoming density (using a Pxr Prim Var node, NOT a default Houdini bind!), and remap it twice: once to sit in a nice 0-1 range for density, and then into values that correspond to blackbody values (roughly 1000 to 6000). The second is fed to a pxr blackbody node, and then to emission. Unfortunately this made all the low density areas of the volume glow red; the blackbody node doesn't seem to want to go down to pure black values. I figured if I could multiply the emission values against density, that would clip it back and look correct. To my surprise there's no pxr mult, pxr add etc nodes. As far as I can tell, you're meant to use SeExpr to do this sort of stuff. Easy to make the node, but spent 45 minutes shouting at it when it didn't do what I wanted. In the end the answer was embarrassingly simple: don't use semicolons, and the final line of your expression becomes the assigned value.

So here, I had the remapped density and remapped emission plugged into the inputs, and the expression is simply the two multiplied together. A nice feature of this setup is there's an exposure multiplier on the blackbody node, so it's very easy to dial the look up and down.

And my word, it's fast, and does full gi emission against other objects with no extra effort.
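The double remap and the multiply described above can be sketched in plain Python, just to show the maths rather than the actual shading network. Everything here is an assumption for illustration: `fit`, `shade_voxel` and `density_max` are my own names, and `blackbody_rgb` is a stand-in callable for whatever the pxr blackbody node does internally.

```python
def fit(value, old_min, old_max, new_min, new_max):
    """Clamp value to the old range, then remap it linearly to the new range."""
    t = (value - old_min) / (old_max - old_min)
    t = min(max(t, 0.0), 1.0)
    return new_min + t * (new_max - new_min)


def shade_voxel(raw_density, density_max, blackbody_rgb):
    """Return (density, temperature, emission) for one volume sample."""
    # Remap 1: density into a 0-1 range
    density = fit(raw_density, 0.0, density_max, 0.0, 1.0)
    # Remap 2: the same density into a blackbody temperature range
    temperature = fit(raw_density, 0.0, density_max, 1000.0, 6000.0)
    r, g, b = blackbody_rgb(temperature)
    # Multiply emission by density so thin areas fade to black instead of glowing red
    emission = (r * density, g * density, b * density)
    return density, temperature, emission


if __name__ == "__main__":
    # Fake blackbody lookup that always returns a dull red, like the low end of the range
    dull_red = lambda temperature: (1.0, 0.2, 0.0)
    print(shade_voxel(0.0, 10.0, dull_red))
    print(shade_voxel(5.0, 10.0, dull_red))
```

Without the multiply, a zero density sample would still emit whatever colour the lowest temperature maps to, which is the red glow problem. With it, zero density gives zero emission.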